The AI industry's safety-first era is collapsing in real time. Steven Levy's latest commentary in Wired captures a pivotal moment: the same companies that promised responsible AI development and called for regulation are now racing to secure Pentagon contracts and build autonomous weapons systems. What was supposed to be a coordinated march toward safety guardrails has devolved into debates about killer robots, marking one of the sharpest pivots in Silicon Valley's ethical trajectory.

The promises didn't even last three years. Back when OpenAI, Anthropic, and other AI labs were calling for government oversight and pledging to prioritize safety over speed, the industry painted itself as different from Big Tech's move-fast-and-break-things era. Now, according to veteran tech journalist Steven Levy, that narrative is crumbling as defense contracts become the new gold rush.

Levy's Wired piece cuts to the uncomfortable truth: "We were promised AI regulation and a race to the top. Now, we're arguing about killer robots." The commentary arrives as Anthropic faces backlash for Pentagon partnerships and OpenAI quietly removes language from its charter about not developing autonomous weapons.

The shift represents a fundamental break from the safety-first positioning that defined 2023 and early 2024. When OpenAI CEO Sam Altman testified before Congress in May 2023, he explicitly called for AI regulation and warned about existential risks. Anthropic built its entire brand around Constitutional AI and responsible scaling policies. Google published AI Principles that prohibited weapons development.

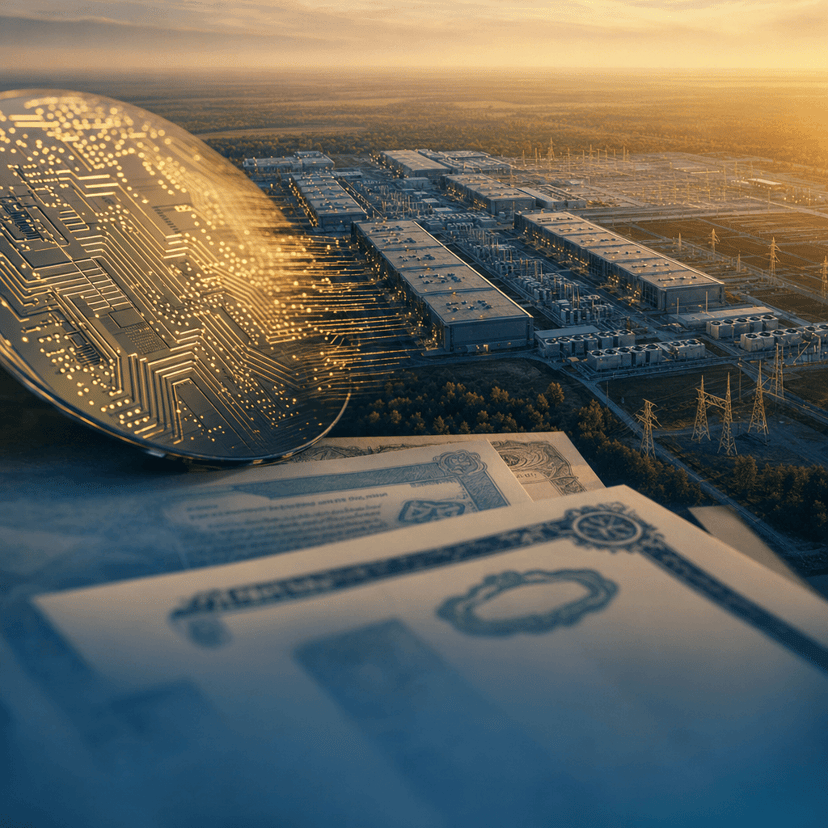

But the competitive landscape changed everything. As Microsoft poured billions into OpenAI and began integrating AI across its product suite, the pressure to monetize intensified. Defense contracts offered a lucrative revenue stream that didn't require consumer adoption curves or enterprise sales cycles. The Pentagon's budget for AI and autonomous systems has grown substantially, creating irresistible financial incentives.

Levy's critique resonates because it addresses the gap between rhetoric and reality. The same executives who signed open letters about AI risks are now pitching autonomous systems to military buyers. The companies that demanded government oversight are resisting the very regulations they once championed when those rules might limit defense applications.

The ethical questions multiplied quickly. Does Constitutional AI apply to targeting systems? Can you claim to prioritize safety while building technology designed for lethal autonomous weapons? Industry insiders who spoke to various outlets on background describe internal tensions, with safety teams increasingly sidelined as business development prioritizes government contracts.

The competitive dynamics accelerated the shift. Once one major lab started pursuing defense work, others felt compelled to follow or risk falling behind both financially and technologically. Google faced employee revolts over Project Maven in 2018, but by 2026 the calculus had shifted as rivals secured Pentagon partnerships without comparable internal resistance.

What Levy captures is the speed of the transformation. This isn't a gradual evolution but a sharp pivot that caught even industry watchers off guard. The regulatory framework that AI companies ostensibly wanted never materialized in meaningful form, leaving a vacuum that military applications quickly filled. The "race to the top" on safety became a race to win defense contracts.

The implications extend beyond individual company decisions. As AI labs optimize for military use cases, research priorities shift. Safety research that doesn't serve defense applications gets deprioritized. Researchers who joined these companies for their safety missions find themselves working on very different problems. The entire trajectory of AI development bends toward applications that three years ago were explicitly off limits.

For an industry that positioned itself as learning from Big Tech's mistakes, the military pivot represents a familiar pattern: initial idealism giving way to commercial pressure, ethical boundaries dissolving under competition, and the gap between public promises and private priorities widening until it becomes impossible to ignore.

Levy's commentary forces a reckoning the AI industry has tried to avoid. You can't claim to prioritize safety and humanity's best interests while building autonomous weapons systems. The military pivot isn't just a business decision but a fundamental redefinition of what these companies are and what role AI will play in society. The question isn't whether AI labs will work with defense departments (that ship has sailed) but whether any of the safety infrastructure and ethical commitments survive the transition. Right now, the answer looks increasingly like no. The race to the top became a race to arm the most sophisticated technology humanity has ever created, and the safety promises that were supposed to guide us were left in the dust.