Amazon Web Services just made a major play in the AI infrastructure race. The cloud giant is partnering with chip startup Cerebras Systems to combine AWS Trainium processors with Cerebras' CS-3 chips, creating what both companies claim will be the fastest AI inference solution available through Amazon Bedrock. The move signals AWS is betting big on specialized silicon to compete with Nvidia-powered alternatives as enterprises demand faster, cheaper AI deployment.

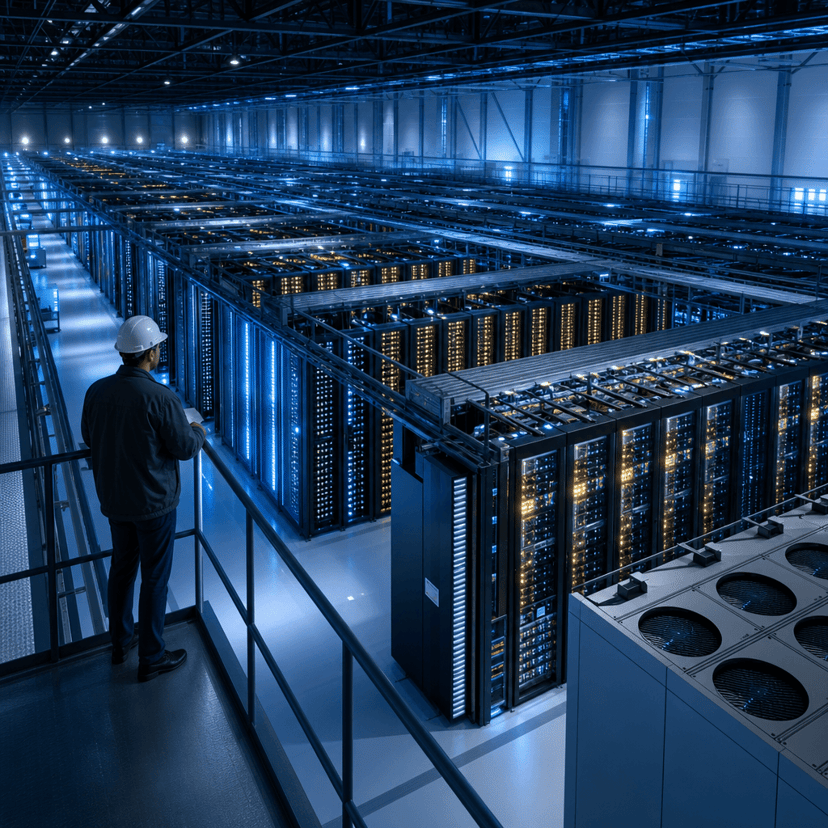

Amazon Web Services is shaking up the AI chip wars with an unexpected alliance. The company announced it's integrating Cerebras Systems' massive CS-3 processors directly into AWS data centers, creating a hybrid inference platform that combines Cerebras silicon with AWS's homegrown Trainium chips. Customers will access the combined horsepower through Amazon Bedrock, AWS's managed service for deploying foundation models.

The partnership addresses what has become the AI industry's most expensive problem: inference costs. While training giant language models grabs headlines, actually running those models at scale devours compute resources and capital. Companies deploying chatbots, code assistants, and AI agents are discovering that inference expenses can dwarf initial training budgets. AWS is betting this Cerebras collaboration will undercut Nvidia H100-based solutions on both speed and price.

Cerebras brings serious silicon credentials to the table. The WSE-3 chip at the heart of the company's CS-3 system is famously massive, roughly the size of a dinner plate, and packs 900,000 AI cores onto a single wafer. That architecture contrasts sharply with traditional GPU clusters that stitch together hundreds of smaller chips. Cerebras has claimed record-breaking speeds for both training and inference, though its enterprise adoption has lagged far behind Nvidia's.

For AWS, this partnership represents a strategic hedge. The company has invested heavily in custom silicon through its Trainium and Inferentia chip families, but customer demand for Nvidia GPUs remains overwhelming. By incorporating Cerebras technology, AWS can offer differentiated performance claims while reducing its dependence on Nvidia, the dominant supplier of AI infrastructure. According to the official announcement, the combined solution will be deployed across AWS regions and integrated natively into Bedrock's API.

The timing matters. Microsoft and Google Cloud are racing to offer the most cost-effective AI infrastructure, with pricing wars already erupting around inference workloads. Meta just open-sourced its latest Llama models, flooding the market with capable alternatives that companies can run on their own infrastructure. Every cloud provider needs a compelling answer to "why run this on your platform instead of building our own cluster?"

Inference performance hinges on three metrics: latency, throughput, and cost per token. Cerebras has previously demonstrated sub-millisecond latency for certain workloads, while AWS Trainium optimizes for high-throughput batch processing. Combining both architectures could let AWS route different inference patterns to the most efficient silicon, potentially delivering better economics than one-size-fits-all GPU solutions.
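To make that trade-off concrete, here is a minimal Python sketch of how a router might weigh the three metrics. It is illustrative only: the `Backend` class, the `route` function, and every number below are hypothetical and do not come from AWS or Cerebras. It assumes cost per token can be approximated as hourly hardware cost divided by sustained throughput.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Backend:
    """A hypothetical inference backend; all figures are made up."""
    name: str
    latency_ms: float         # assumed time to first token
    tokens_per_second: float  # assumed sustained throughput
    dollars_per_hour: float   # assumed hourly hardware cost

    def cost_per_million_tokens(self) -> float:
        # Hourly cost spread over the tokens produced in one hour.
        tokens_per_hour = self.tokens_per_second * 3600
        return self.dollars_per_hour / tokens_per_hour * 1_000_000


def route(backends, max_latency_ms=None):
    """Return the cheapest backend that meets the latency budget, if any."""
    eligible = [
        b for b in backends
        if max_latency_ms is None or b.latency_ms <= max_latency_ms
    ]
    if not eligible:
        raise ValueError("no backend satisfies the latency budget")
    return min(eligible, key=lambda b: b.cost_per_million_tokens())


# Placeholder figures chosen only to show the shape of the decision.
BACKENDS = [
    Backend("low-latency", latency_ms=5, tokens_per_second=2_000, dollars_per_hour=36.0),
    Backend("high-throughput", latency_ms=200, tokens_per_second=10_000, dollars_per_hour=72.0),
]

interactive = route(BACKENDS, max_latency_ms=50)  # e.g. a chatbot turn
batch = route(BACKENDS)                           # e.g. offline summarization
```

With these placeholder figures, the latency-bound request lands on the faster but pricier backend, while the batch job goes to the one with the lowest cost per token. That is the kind of split the announcement hints at, though the actual routing logic has not been described.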

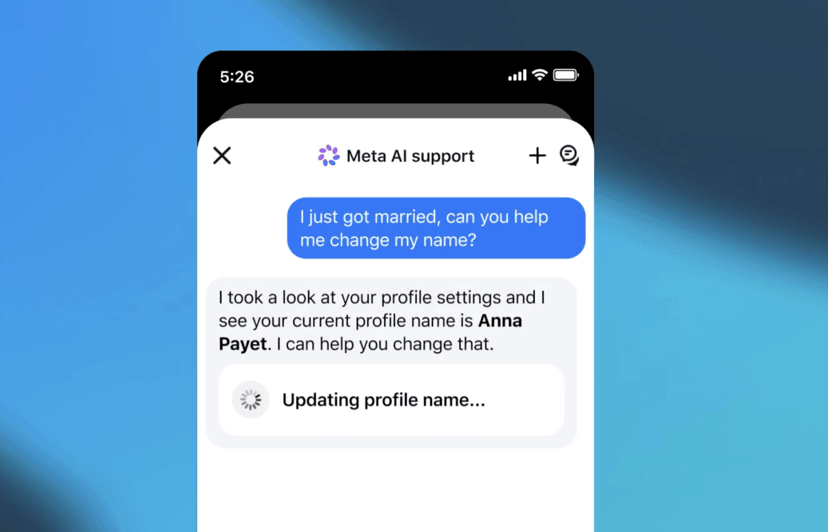

The Amazon Bedrock integration is key to adoption. Bedrock already hosts models from Anthropic, AI21 Labs, and others through a unified API. Adding Cerebras-accelerated inference means customers can potentially switch between different hardware backends without rewriting code. That flexibility matters when enterprises are still figuring out which models and architectures will dominate long-term.
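As a rough sketch of what "without rewriting code" could look like, the snippet below builds two requests in the shape accepted by Bedrock's Converse API (`modelId`, `messages`, `inferenceConfig`). It rests on an assumption the announcement does not confirm: that a hardware backend would be selected through the model identifier. Both identifiers here are invented placeholders, and `build_converse_request` is a hypothetical helper, not part of any AWS SDK.

```python
def build_converse_request(model_id: str, prompt: str, max_tokens: int = 256) -> dict:
    """Build keyword arguments in the shape of a Bedrock Converse call."""
    return {
        "modelId": model_id,
        "messages": [{"role": "user", "content": [{"text": prompt}]}],
        "inferenceConfig": {"maxTokens": max_tokens},
    }


# Hypothetical identifiers: real model IDs for any Cerebras-backed
# offering have not been published.
GPU_BACKED_MODEL = "example.gpu-backed-model-v1"
CEREBRAS_BACKED_MODEL = "example.cerebras-backed-model-v1"

prompt = "Summarize this quarter's support tickets."
gpu_request = build_converse_request(GPU_BACKED_MODEL, prompt)
cerebras_request = build_converse_request(CEREBRAS_BACKED_MODEL, prompt)

# With boto3 installed and AWS credentials configured, either dict could
# be sent as: boto3.client("bedrock-runtime").converse(**gpu_request)
```

Under that assumption, the only difference between the two requests is the `modelId` string; the application code and message payload stay the same.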

But questions remain. AWS hasn't disclosed pricing for the Cerebras-enhanced service, which will determine whether this becomes a mainstream option or a premium tier for latency-sensitive workloads. The company also hasn't published independent benchmarks comparing the hybrid solution to Nvidia A100 or H100 clusters. Without hard performance data, enterprise customers may stick with proven GPU-based deployments despite potential cost savings.

Competitive pressure is mounting from unexpected directions. Groq is winning customers with its specialized inference chips, while OpenAI reportedly plans custom silicon for GPT inference. Even Tesla is building its own Dojo supercomputer for AI workloads. The era of Nvidia's unchallenged dominance appears to be fracturing into a more diverse chip ecosystem.

For Cerebras, the AWS partnership provides massive distribution it couldn't achieve alone. The startup has raised over $700 million but struggled to convert technical performance into broad market share. Getting deployed across AWS's global infrastructure instantly makes Cerebras technology accessible to millions of developers and thousands of enterprises. It's a validation that could accelerate Cerebras' long-rumored IPO plans.

The announcement lands as AI infrastructure spending reaches unprecedented levels. Companies are projected to invest over $200 billion in AI compute capacity this year, with cloud providers capturing the majority of that spend. AWS needs differentiated offerings to defend its market share against Microsoft Azure, which has leveraged its OpenAI partnership into rapid AI revenue growth.

This partnership signals a fundamental shift in AI infrastructure strategy. Rather than betting everything on homegrown chips or Nvidia partnerships, AWS is assembling a portfolio of silicon options to match different workload requirements. If the Cerebras integration delivers meaningful cost or performance advantages, it could force Microsoft and Google to pursue similar multi-vendor approaches. For enterprises, the real win is choice: the ability to optimize inference spending without being locked into a single chip vendor. Watch for benchmark data and customer adoption signals over the next quarter to see whether this becomes a market-moving alternative or remains a niche offering for specific use cases.