Microsoft just became the first hyperscale cloud provider to power on NVIDIA's next-generation Vera Rubin NVL72 systems, marking a significant infrastructure leap as enterprise AI shifts from training to inference-heavy workloads. Announced at NVIDIA GTC, the partnership expansion brings Microsoft Foundry Agent Service to general availability, adds NVIDIA Nemotron models to Azure, and deepens Physical AI integration between Microsoft Fabric and NVIDIA Omniverse. The moves signal Microsoft's bet that production AI agents and reasoning-based workloads will reshape cloud infrastructure demands.

Microsoft and NVIDIA are reshuffling the enterprise AI infrastructure deck. At NVIDIA's GTC conference, Microsoft announced that it has become the first hyperscale cloud provider to power on NVIDIA's newest Vera Rubin NVL72 systems in its labs - a significant move as AI workloads shift from training large models to running inference-heavy, reasoning-based agents at scale.

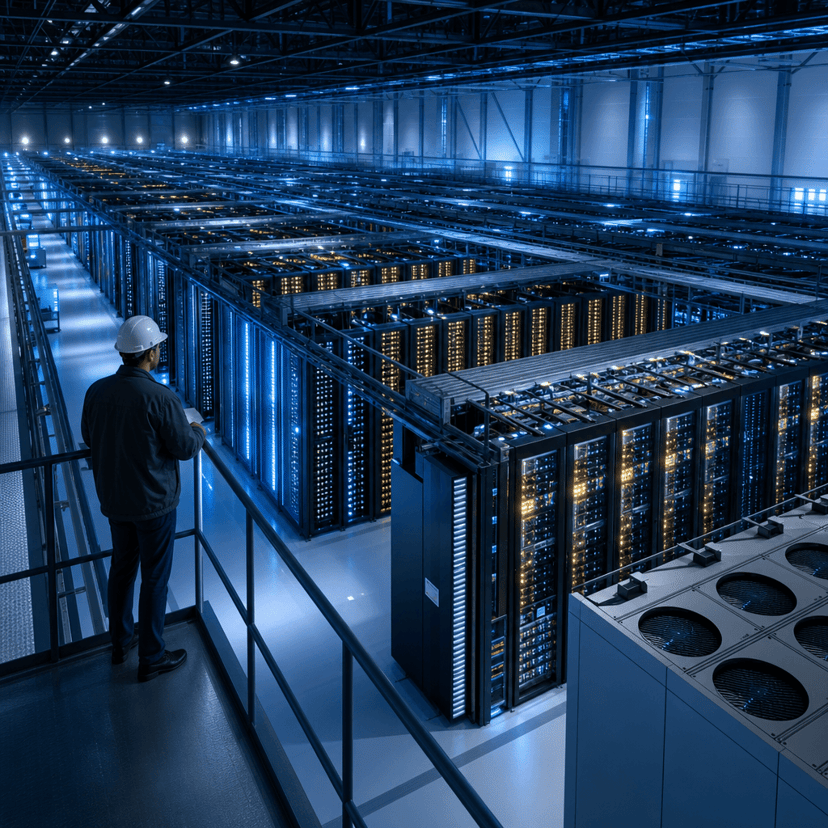

The announcement extends a years-long hardware partnership that's taken on new urgency. According to Microsoft's official blog post, the company has deployed "hundreds of thousands of liquid-cooled Grace Blackwell GPUs" across its global datacenter footprint in less than a year. Now Vera Rubin NVL72 will roll out to Azure's modern, liquid-cooled datacenters over the next few months.

That infrastructure build-out isn't just about raw compute. It's designed to support a different type of AI workload - one where agents reason, plan, and act across enterprise tools and data rather than simply responding to prompts. Microsoft is betting that this shift will require purpose-built systems optimized for inference rather than training.

Enter Microsoft Foundry Agent Service, which hit general availability today. The platform lets organizations build and operate AI agents at production scale, with Foundry Control Plane providing developers end-to-end visibility into agent behavior. Corvus Energy, a marine battery manufacturer, is already using Foundry to replace manual inspection workflows with agent-driven operational intelligence across its global fleet.

"Microsoft Foundry serves as the operating system for building, deploying and operating AI at enterprise scale," according to Yina Arenas, who leads product strategy for Microsoft Foundry. The platform brings together models, tools, data, and observability into what Microsoft positions as a single system designed specifically for production agents rather than experimental chatbots.

The agent push gets a voice boost with Voice Live API integration for Foundry Agent Service entering public preview. Developers can now build voice-first, multimodal, real-time agentic experiences - think customer service bots that actually work, not just transcribe. That pairs with expanded security integrations from Palo Alto Networks' Prisma AIRS and Zenity, addressing the enterprise trust gap that's slowed agent adoption.

But Microsoft isn't locking customers into proprietary models. NVIDIA Nemotron models are now available through Microsoft Foundry, joining what Microsoft claims is "the widest selection of models on any cloud" - including reasoning, frontier, and open models. The move follows Microsoft's recent announcement bringing Fireworks AI to Microsoft Foundry, enabling customers to fine-tune open-weight models like NVIDIA Nemotron into low-latency assets that can be distributed to edge deployments.

The infrastructure strategy extends beyond public cloud. Microsoft announced initial support for the NVIDIA Vera Rubin platform on Azure Local, extending accelerated AI capabilities to customer-controlled and sovereign environments. This matters for regulated industries and governments that want next-generation AI capabilities without sending data to public clouds. The approach maintains Azure-consistent operations, governance, and security through Microsoft's unified software layer with Azure Arc and Foundry Local.

That sovereign play got a recent boost when Microsoft announced Foundry Local support for modern infrastructure and large AI models in completely disconnected environments - critical for defense and intelligence applications.

Microsoft and NVIDIA are also pushing into Physical AI, where digital intelligence meets real-world robotics and industrial systems. The companies are deepening integration between Microsoft Fabric and NVIDIA Omniverse libraries, connecting live operational data with physically accurate digital twins and simulation. In practice, manufacturers can see what's happening across physical systems, understand it in real time, and use AI to coordinate action across machines and facilities.

Microsoft introduced a public Azure Physical AI Toolchain GitHub repository integrated with NVIDIA's Physical AI Data Factory Blueprint. The toolchain connects physical assets, simulation environments, and cloud training into repeatable pipelines - moving Physical AI from research projects to production deployments in factories, energy facilities, and logistics operations.

"Microsoft Foundry serves as the platform for hosting and operating Physical AI systems on Azure at cloud scale," according to the announcement. That means customers can build, train, and operate robotics workflows that bridge simulation and real-world operations without stitching together disparate tools.

The announcements underscore how enterprise AI infrastructure is fragmenting into specialized workloads. Training foundation models still matters, but inference, reasoning, agents, and Physical AI each demand different architectural approaches. Microsoft's bet is that winning enterprise AI means supporting all of them through a unified platform layer while staying hardware-agnostic enough to ride successive GPU generations from NVIDIA and potentially other chip makers.

For NVIDIA, the partnership ensures its latest silicon reaches enterprise customers through Azure's global footprint and sovereign cloud offerings - critical as inference chips from AMD, Intel, and startups challenge NVIDIA's training dominance. For Microsoft, it's about maintaining Azure's position as the enterprise AI cloud while OpenAI partner dynamics remain complex.

The infrastructure race isn't slowing down. Microsoft's ability to deploy "hundreds of thousands" of GPUs in under a year and quickly integrate next-generation systems like Vera Rubin shows how cloud providers are building operational muscle to stay current as chip generations accelerate. That speed matters as enterprises move from experimenting with AI to running it in production across customer-facing and operational systems.

Microsoft's GTC announcements signal that the enterprise AI infrastructure battle is entering a new phase focused on production workloads rather than experimental deployments. By becoming the first hyperscale cloud provider to power on Vera Rubin NVL72 while launching agent platforms and Physical AI tooling, Microsoft is betting that winning enterprise AI means supporting diverse workload types through unified platforms. The question is whether this integrated approach can keep pace as AI workloads fragment and specialized competitors emerge across training, inference, agents, and edge deployments. For enterprises, the moves lower barriers to running production AI - but also lock them deeper into Microsoft's expanding AI platform stack.